By Shradha

AI Assist Research

Governed Decision-Support for Enterprise Research

Context & Challenge

Enterprise researchers working in policy, regulatory, and compliance environments often navigate multiple dashboards, repositories, and lengthy documents to answer a single question. The process is fragmented, cognitively heavy, and difficult to defend later, especially in environments where insight without evidence carries operational and legal risk.

Enterprise research had three major challenges:

Why Not Search or a Chatbot?

Traditional keyword search retrieves only documents.

Conversational AI answers questions.

AI Assist grounds, structures, and governs responses, enabling accountable enterprise decisions.

Core Features

Cross-Functional Collaboration

Translating stakeholder needs into technical requirements.

Confidence Levels & Guardrails

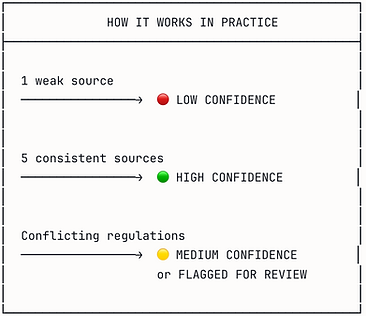

These confidence levels and clarifications are neither random nor a form of 'AI self-belief.'

Confidence levels were designed in collaboration with the AI/ML team by translating retrieval scores, source agreement, and coverage depth into understandable UX signals.
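To illustrate how three retrieval signals could collapse into one readable label, here is a minimal sketch. It assumes each signal is already normalized to [0, 1]. The names `ConfidenceSignals` and `confidence_label`, the weights, and the cut-offs are all hypothetical. They are not the values agreed with the AI/ML team.

```python
from dataclasses import dataclass


@dataclass
class ConfidenceSignals:
    """Hypothetical per-answer signals, each assumed normalized to [0.0, 1.0]."""
    retrieval_score: float   # e.g. mean relevance of the top retrieved passages
    source_agreement: float  # share of cited sources that support the same answer
    coverage_depth: float    # share of the question's sub-parts backed by a source


# Hypothetical weights; a real product would tune these with the AI/ML team.
WEIGHTS = {"retrieval_score": 0.4, "source_agreement": 0.35, "coverage_depth": 0.25}


def confidence_label(signals: ConfidenceSignals) -> str:
    """Map raw retrieval signals to a coarse label a researcher can act on."""
    for name in WEIGHTS:
        value = getattr(signals, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")

    # Hypothetical rule: any single very weak signal caps the label at "Low",
    # so a high weighted average cannot hide, say, contradictory sources.
    if min(getattr(signals, name) for name in WEIGHTS) < 0.3:
        return "Low"

    score = sum(weight * getattr(signals, name) for name, weight in WEIGHTS.items())
    if score >= 0.75:
        return "High"
    if score >= 0.5:
        return "Medium"
    return "Low"


print(confidence_label(ConfidenceSignals(0.9, 0.85, 0.8)))  # → High
print(confidence_label(ConfidenceSignals(0.6, 0.6, 0.6)))   # → Medium
print(confidence_label(ConfidenceSignals(0.9, 0.2, 0.9)))   # → Low
```

The "weakest signal caps the label" rule reflects the idea that confidence should not be AI self-belief. Strong retrieval cannot compensate for sources that disagree.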

Retrieval boundaries were defined based on approved data sources, jurisdiction filters, and minimum relevance thresholds.
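These retrieval boundaries can be read as a filter that runs before any passage reaches an answer. The minimal sketch below assumes each retrieved passage carries a source ID, a jurisdiction tag, and a relevance score. The `Passage` and `within_boundaries` names, the source allowlist, the jurisdiction codes, and the 0.6 threshold are all hypothetical.

```python
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str     # repository the passage came from
    jurisdiction: str  # jurisdiction tag attached at indexing time
    relevance: float   # retriever relevance score, assumed in [0, 1]
    text: str


# Hypothetical governance configuration, not the project's actual values.
APPROVED_SOURCES = frozenset({"policy-library", "regulatory-register"})
MIN_RELEVANCE = 0.6


def within_boundaries(
    passages: Iterable[Passage],
    jurisdictions: Iterable[str],
    min_relevance: float = MIN_RELEVANCE,
) -> list[Passage]:
    """Keep only passages from approved sources, in the selected
    jurisdictions, and at or above the minimum relevance threshold."""
    allowed = set(jurisdictions)
    return [
        p for p in passages
        if p.source_id in APPROVED_SOURCES
        and p.jurisdiction in allowed
        and p.relevance >= min_relevance
    ]


results = [
    Passage("policy-library", "EU", 0.82, "Data retention guidance"),
    Passage("web-forum", "EU", 0.91, "Forum thread"),                 # unapproved source
    Passage("regulatory-register", "US", 0.75, "Federal rule"),       # other jurisdiction
    Passage("regulatory-register", "EU", 0.41, "Loosely related"),    # below threshold
]
kept = within_boundaries(results, jurisdictions=["EU"])
print([p.source_id for p in kept])  # → ['policy-library']
```

An empty result is a natural trigger for a clarification prompt instead of an unsupported answer. That pairing is an assumption, not a documented detail of the project.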

Process Map & System Architecture

I translated these inputs into a five-phase process map, with each phase designed around specific user needs and technical capabilities we could reliably deliver.


Validation & Testing

I led the user validation plan across multiple rounds of testing, covering individual features and the overall workflow. I also ran reviews with content strategy, accessibility, and engineering to confirm feasibility and compliance.

Testing Methods

- A/B testing for hierarchy
- Usability and comprehension testing
- AI interaction testing
- WCAG accessibility audits

What we tested:

- Navigation
- Section layouts
- Task flows
- AI features
- Personalization relevance
- Cross-platform behavior

Handover & Build Support

I owned the full handoff process, ensuring engineering had the clarity, documentation, and interaction logic needed for the overall flow.

Key highlights:

- Authored annotated specs for complex flows
- Led engineering and leadership walkthroughs
- Defined a unified interaction model for consistency
- Supported development and QA cycles through launch

Final work:

Mobile app:

Earlier experience

New experience

Website:

Earlier experience

New experience

Impact (Measured Outcomes)

Customer & Platform Impact:

- 36% faster task completion
- +22% retention on Profile & Settings
- +4.2-point NPS increase
- Significant reduction in negative feedback

Business Impact:

- Reduced support dependency
- Increased engagement with benefits and memberships
- Scalable IA and governance that reduce future design and engineering overhead
- A platform foundation for AI and personalization

Key Learnings

- IA requires systems thinking: navigation affects every downstream experience.
- Alignment is continuous, not a phase: cross-functional clarity is critical for complex programs.
- Governance is essential for scale: shared patterns enable consistency and speed across multiple teams.
- Personalization is empathy: users value relevance, not generic “smart” content.
- Leadership is about enabling others: creating tools, patterns, and clarity helps teams move faster together.